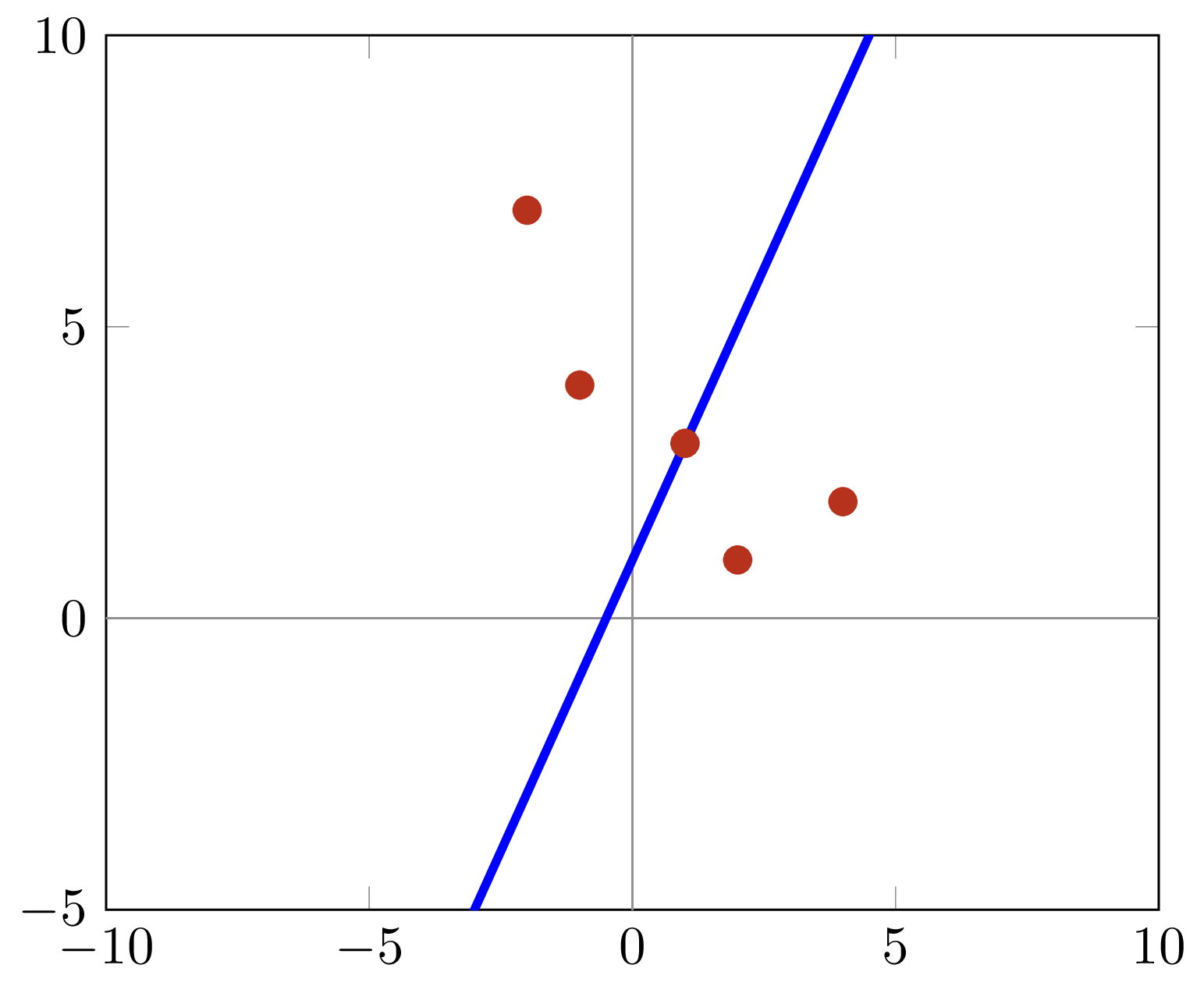

I would advise you to read up on linear regression and the assumptions behind it. Certain assumptions/requirements must be met before you can be sure that the estimated coefficients correctly describe the true underlying relationship. It's important to know that a regression will not generate these "correct" estimates in all cases. You are kind of right in your thinking though. With a large enough sample you can, as stated, estimate the linear relationships in your data via a regression (0.5 and 0.7 in the simulated example below). These coefficients will be returned in the vector you call `w`. Thus, these coefficients can be seen as the denoised relationship between your signal and the random variables.

Linear regression fits a data model that is linear in the model coefficients. A data model explicitly describes a relationship between predictor and response variables. The most common type of linear regression is a least-squares fit, which can fit both lines and polynomials, among other linear models.

There are two commands in MATLAB for doing multiple linear regression. Why do the two functions give different outputs? `b = robustfit(X,Y)` uses robust linear regression to fit Y as a function of the columns of X. This MATLAB function returns the estimated coefficients for a multivariate normal regression of the d-dimensional responses in Y on the design matrices in X.

If you want to normalize it between 0 and 1, you could use the `mat2gray` function (assuming `vector` as your list of variables): `normvect = mat2gray(vector)`. This function is used to convert a matrix into an image, but it works well if you don't want to write your own.

Functional response regression can be performed to regress $Y_i(t)$ on $X_{\text{cancer},i}$ (= 1 if from a cancer patient, 0 if control) using Equation 9 and then assessing.

Try the following: `res = linregress(pupVals, defVals)`, `SlopeFit = np.array([res.intercept, res.slope])`, `errs = np.array([res.intercept_stderr, res.stderr])`, `CIs = SlopeFit[:, None] + 1.96 * errs[:, None] * np.…`. Note that the standard errors must be listed in the same order as the estimates (intercept first, then slope).
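As a rough sketch of the `linregress` suggestion above, here is how approximate 95% confidence intervals could be computed in Python. The data is made up for illustration (the real `pupVals` and `defVals` are not given), and the final `np.array([-1.0, 1.0])` factor is my assumption about how the truncated line ends. It also assumes a SciPy version that exposes `intercept_stderr` on the result object.

```python
import numpy as np
from scipy.stats import linregress

# Hypothetical data standing in for pupVals and defVals (not from the source).
rng = np.random.default_rng(0)
pupVals = np.linspace(0.0, 10.0, 200)
defVals = 2.0 + 0.5 * pupVals + rng.normal(scale=0.3, size=pupVals.size)

res = linregress(pupVals, defVals)

# Keep estimates and standard errors in the same order: intercept, then slope.
SlopeFit = np.array([res.intercept, res.slope])
errs = np.array([res.intercept_stderr, res.stderr])

# Normal-approximation 95% intervals: estimate -/+ 1.96 * standard error.
# The [-1, 1] factor is an assumption; the original snippet is cut off here.
CIs = SlopeFit[:, None] + 1.96 * errs[:, None] * np.array([-1.0, 1.0])

print("intercept CI:", CIs[0])
print("slope CI:    ", CIs[1])
```

Each row of `CIs` holds the lower and upper bound for one coefficient. For small samples a t-distribution quantile would be more appropriate than 1.96.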
Linear regression is a statistical modeling technique used to describe a continuous response variable as a function of one or more predictor variables. Linear regression techniques are used to create a linear model, which can help you understand and predict the behavior of complex systems or analyze experimental, financial, and biological data.

The resulting third-order regression is shown in Fig. An example of a program which can be used to do this is given in Appendix C. Also, plot the solution for the line over the previously plotted data set in MATLAB. Compare the coefficient values achieved from the MATLAB solution to those found using Excel.

We regress $Y$ on these basis functions, which yields the expression $E[Y \mid S]$. Let's assume that the price of the stock is $S(t)$ and the payoff function is.

As your simulated signal does not have any constant parameter attached to some stochastic (random) variable, there is no linear relationship in your data. This means that you can't find a relationship between the signal and the variable. Here is an example of data you could simulate where you can find a linear relationship (via a regression) between your signal and some variables: `signal = 0.5 * randomvariable + 0.7 * randomvariable2 + randomnoiseterm`. Doing a regression on this on a large enough sample would yield coefficients of approximately 0.5 and 0.7.
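To make the simulated-signal example concrete, here is a minimal sketch in Python. The sample size, the noise scale, and the choice of standard normal variables are my assumptions; only the coefficients 0.5 and 0.7 and the variable names come from the text.

```python
import numpy as np

rng = np.random.default_rng(42)
n = 10_000  # assumed sample size, large enough for stable estimates

# Two random predictors and a noise term (distributions are assumptions).
randomvariable = rng.normal(size=n)
randomvariable2 = rng.normal(size=n)
randomnoiseterm = rng.normal(scale=0.5, size=n)

# The signal has fixed coefficients attached to the random variables.
signal = 0.5 * randomvariable + 0.7 * randomvariable2 + randomnoiseterm

# Ordinary least squares: one column per predictor, no intercept in this model.
X = np.column_stack([randomvariable, randomvariable2])
w, *_ = np.linalg.lstsq(X, signal, rcond=None)

print(w)  # estimates should be close to 0.5 and 0.7
```

The noise is not removed from `signal` itself; what the regression recovers is the underlying relationship, stored in `w`.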

If I understand your question correctly, you are trying to filter out the noise from the net_signal. Let me try to explain to you why this is not what you are doing in your code. First of all, a regression is not a tool to denoise something, but a tool to describe/estimate (linear) relationships between data/variables.

We now consider the case when the mean is a function of another.

So calculating that for a quadratic fit (in $x$) to $y^*=(y-\min(y))^2$ should give a good estimate of $m$. Then, given that $m$, a linear regression of $y$ on $|x-m|$ gives a good $a$ and $b$.

`b = regress(y,X)` returns a vector `b` of coefficient estimates for a multiple linear regression of the responses in vector `y` on the predictors in matrix `X`. To compute coefficient estimates for a model with a constant term (intercept), include a column of ones in the matrix `X`.
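The point about the column of ones can be illustrated with a small sketch. This uses NumPy's `np.linalg.lstsq` as a stand-in for MATLAB's `regress`, and the data is a made-up exact line chosen for illustration.

```python
import numpy as np

# Made-up data lying exactly on y = 1 + 2x.
x = np.array([0.0, 1.0, 2.0, 3.0, 4.0])
y = 1.0 + 2.0 * x

# With a column of ones, the first coefficient is the intercept.
X = np.column_stack([np.ones_like(x), x])
b, *_ = np.linalg.lstsq(X, y, rcond=None)

# Without it, the fitted line is forced through the origin,
# so the slope estimate is distorted.
X_no_const = x[:, None]
b0, *_ = np.linalg.lstsq(X_no_const, y, rcond=None)

print("with intercept:   ", b)
print("without intercept:", b0)
```

Leaving out the column of ones does not raise an error; it silently fits a different model, which is why the estimates differ.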
